How We Built Dr. Binary, Part 1: Beating the Superficial-Features Trap

This is the first post in a series on the engineering decisions behind Dr. Binary, our platform for making binary analysis accessible to non-experts using LLMs. Each post in the series tackles one specific failure mode of naive LLM-powered binary analysis and walks through how we addressed it.

Part 1 is about the most fundamental failure mode of all: LLMs evaluate binaries by their most obvious features and stop there.

The Problem with Binary Analysis Today

Binary analysis — examining compiled programs, firmware images, and other artifacts where source code is unavailable — has long been the domain of a small group of specialists. Reverse engineers spend years learning to read disassembly, recognize compiler patterns, and trace data flow through tens of thousands of functions. The barrier to entry is steep, and the talent pool is shallow.

LLMs felt like an obvious lever. Give a model access to disassembly and let it explain what's going on. The hope: democratize binary analysis so that developers, security teams, and IT staff without deep RE backgrounds can answer questions like "is this firmware backdoored?" or "is this third-party binary doing what it claims?"

The reality has been more complicated.

Why Naive LLM-Powered Binary Analysis Fails

LLMs cannot read binary code directly. The natural fix is to wrap them with reverse engineering tools — objdump, Ghidra scripts, radare2, and the like — and let the model query the binary through those interfaces. Even with that scaffolding, performance on binary analysis tasks has been hit or miss.

The team at Quesma documented this clearly in their writeup on BinaryAudit, and we observed the same pattern in our own evaluations. The shape of the failure is consistent and specific: LLMs grade binaries on their most obvious features.

Concretely, when handed a binary and a question like "does this contain a backdoor?", a vanilla LLM-with-tools agent tends to:

- Run strings and grep for obvious indicators (shell paths like /bin/sh, hardcoded IPs, suspicious URLs, debug strings) and treat their absence as evidence of safety.

- Inspect a handful of functions chosen by name or by surface heuristic, typically less than 1% of the total, before declaring a verdict.

- Stop following cross-references the moment a story sounds plausible, instead of chasing data flow to where it actually originates.

- Produce a verdict that reads as authoritative because it is written in the voice of an expert, not because it was reached by an expert process.

This is the analytical equivalent of glancing at the cover of a book and writing the review. Real binary analysis often requires finding a needle in a haystack: a single suspicious comparison buried inside one of thousands of functions, an authentication bypass reachable only through a specific sequence of calls, a routine that looks innocuous in isolation but is wired into something dangerous three call-sites away. The needle is rarely in the part of the binary that surface-level features advertise.

The deeper issue is that the task has two distinct components, and LLMs handle them very differently:

- A deterministic, exhaustive part. You need to actually look at every function, every cross-reference, every string. There are no shortcuts. This is where domain expertise — knowing what to look for and where to look — has historically been irreplaceable.

- An exploratory, judgment-driven part. Once candidates are surfaced, you need to reason about intent, context, and significance. This is genuinely hard, open-ended work where LLMs shine.

Vanilla LLM agents collapse these two parts into one and let the model decide how much effort to spend on each. Predictably, they spend almost none on the first and jump straight to the second, because choosing where to look is itself an open-ended problem, and the model has no built-in sense of "I have not yet looked at enough of this binary to have an opinion." So it forms one anyway, anchored on whichever obvious features happened to come up first.

Dr. Binary's Exhaustive Analysis Tool

The fix is not to ask the LLM to be more thorough. It is to stop letting the LLM choose how thorough to be.

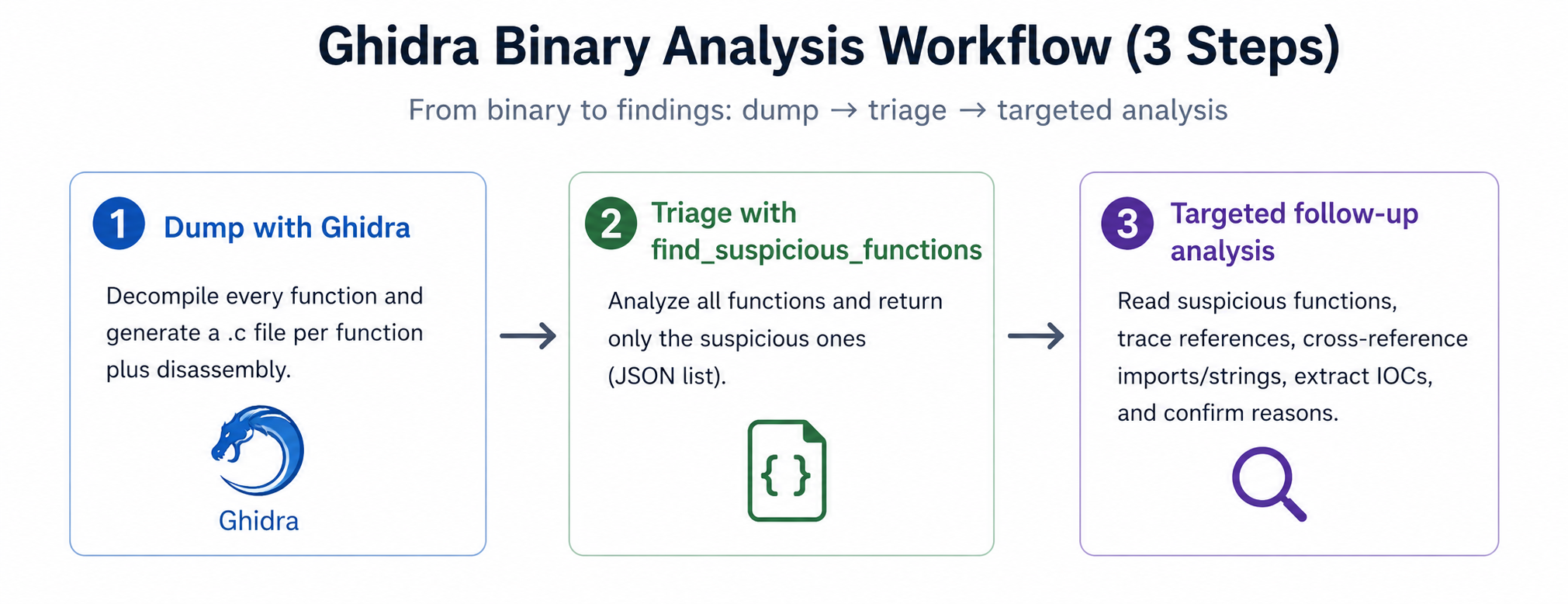

Dr. Binary ships a dedicated exhaustive analysis tool that processes the entire binary up front, before the LLM is asked to draw any conclusions. Every function gets disassembled and decompiled. Every cross-reference is enumerated. Every string, imported symbol, exported symbol, and call-graph edge is extracted and indexed. The output is a structured representation of the complete binary — not a search problem the model has to solve by guessing where to look, but a fully indexed corpus it can query precisely.
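One way to picture such an index is the minimal Python sketch below. It is an illustration under assumptions, not Dr. Binary's actual data model: the class names, field names, and the `context_for` helper are all hypothetical. The point it shows is that once call edges are stored in both directions, a function can be handed to the model together with its callers and callees instead of being discovered by guesswork.

```python
from dataclasses import dataclass, field


@dataclass
class FunctionRecord:
    """One indexed function. All field names are illustrative."""
    address: int
    name: str
    decompiled: str = ""
    callers: set[int] = field(default_factory=set)
    callees: set[int] = field(default_factory=set)
    strings: list[str] = field(default_factory=list)


class BinaryIndex:
    """Hypothetical in-memory index, built once before any LLM call."""

    def __init__(self) -> None:
        self.functions: dict[int, FunctionRecord] = {}

    def add_function(self, record: FunctionRecord) -> None:
        self.functions[record.address] = record

    def add_call(self, caller: int, callee: int) -> None:
        # Store the edge in both directions so either side can be queried.
        self.functions[caller].callees.add(callee)
        self.functions[callee].callers.add(caller)

    def context_for(self, address: int) -> dict:
        """Return a function together with the names of its neighbors."""
        rec = self.functions[address]
        return {
            "name": rec.name,
            "decompiled": rec.decompiled,
            "callers": sorted(self.functions[a].name for a in rec.callers),
            "callees": sorted(self.functions[a].name for a in rec.callees),
            "strings": list(rec.strings),
        }


# Toy binary with three functions and two call edges (made-up names).
index = BinaryIndex()
index.add_function(FunctionRecord(0x1000, "handle_request"))
index.add_function(FunctionRecord(0x2000, "FUN_00002000"))
index.add_function(FunctionRecord(0x3000, "spawn_worker"))
index.add_call(0x1000, 0x2000)
index.add_call(0x2000, 0x3000)

ctx = index.context_for(0x2000)
print(ctx["callers"], ctx["callees"])
```

A query for the anonymous `FUN_00002000` returns its neighbors along with it, so a boring name no longer hides what the function is wired into.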

This changes what the LLM is doing in a fundamental way.

In the naive setup, the model has to pick which functions to look at. That picking step is where the laziness lives — every function the model declines to open is a function it has implicitly judged unworthy of attention, based on nothing more than its name or a surface heuristic. Multiply that across thousands of functions, and the model has effectively decided most of the answer before it has read any of the binary.

With exhaustive analysis, the picking step does not exist as a place where the model can cut corners. The full structure of the binary is already laid out. The model's queries are no longer "should I look at this function?" but "what does this function do, given that I already know what calls it and what it calls?" Coverage is no longer a thing the LLM has to opt into. It is the default.

The second-order effect is that the quality of the LLM's reasoning improves, because it is now reasoning from comprehensive evidence rather than from a self-selected highlight reel. When the model says "I see no evidence of a backdoor in this binary," that statement is now backed by a survey of the whole binary, not by the absence of /bin/sh in strings output. When it does flag something suspicious, it can actually point at where the suspicious thing is reachable from, because the call-graph is already in front of it.

This is the same model, with the same prompts, doing better work — because the work environment no longer rewards skipping the work.

Implementation: One Function at a Time

The high-level idea — process every function in the binary up front — invites an obvious cost question. There are often thousands of functions in a single binary. What does it actually take to look at all of them?

The simplest possible implementation is to hand every function, one at a time, to a cheap model and let it judge whether anything is suspicious. This works, but it is slow if you do it serially. The natural optimization is to batch multiple functions into one query and amortize the per-request overhead.

We tried. It does not work, for two specific reasons:

- Result coverage drops. When you query the model about N functions in one batch, you reliably get back fewer than N answers, no matter how strict the response schema and no matter how many "respond about every function" instructions you add to the prompt. Some functions silently disappear from the output. The longer the batch, the worse the gap.

- Per-function quality drops. Even when the model does respond about a given function, the analysis is shallower than it would have been in isolation. The model gets distracted across the batch and misses problems it would have caught one at a time.

Both effects push in the same direction: batching trades correctness for throughput, and on a needle-in-a-haystack task, that is the wrong trade.
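The coverage gap is easy to measure once you compare what was asked against what came back. The sketch below is a hypothetical check, not our evaluation harness: the response shape (a list of objects with a `function` key) and the sample data are invented purely to show the bookkeeping.

```python
def missing_answers(requested: list[str], answered: list[dict]) -> list[str]:
    """Return requested function names with no entry in the batch response."""
    seen = {item["function"] for item in answered}
    return [name for name in requested if name not in seen]


# Hypothetical batch of five functions and a response covering only three.
batch = ["FUN_001", "FUN_002", "FUN_003", "FUN_004", "FUN_005"]
response = [
    {"function": "FUN_001", "suspicious": False},
    {"function": "FUN_003", "suspicious": True},
    {"function": "FUN_005", "suspicious": False},
]

dropped = missing_answers(batch, response)
coverage = 1 - len(dropped) / len(batch)
print(dropped, f"{coverage:.0%}")
```

With one function per request, this check becomes trivial: a request either returned an answer or it did not, and a failed request can be retried on its own.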

So we landed on the simpler architecture, made fast in a different way: GPT-5-nano as the first-layer filter, one function per request, fired in parallel. OpenAI's high requests-per-minute (RPM) and tokens-per-minute (TPM) limits let parallelism do the work that batching was supposed to do. We keep the per-function focus that single-function prompting gives us, and we keep wall-clock cost reasonable by fanning out instead of concatenating.
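The fan-out pattern can be sketched in a few lines of Python with `asyncio`. This is a minimal illustration under assumptions, not our production code: `classify_function` is a hypothetical stand-in for the model request (it uses a trivial substring check so the example runs offline), and `max_in_flight` is an arbitrary concurrency cap you would tune to your own rate limits.

```python
import asyncio


async def classify_function(name: str, code: str) -> dict:
    """Stand-in for one model request about one function (hypothetical).

    A real version would send `code` to the filter model. This stub flags
    any function mentioning "spawn" so the sketch runs without a network.
    """
    await asyncio.sleep(0)  # yield control, as a network call would
    return {"name": name, "suspicious": "spawn" in code}


async def filter_all(functions: dict[str, str], max_in_flight: int = 64) -> list[dict]:
    """Issue one request per function, bounded by a concurrency limit."""
    limit = asyncio.Semaphore(max_in_flight)

    async def one(name: str, code: str) -> dict:
        async with limit:
            return await classify_function(name, code)

    results = await asyncio.gather(*(one(n, c) for n, c in functions.items()))
    # One request per function means one answer per function, so coverage
    # is enforced by construction rather than requested in a prompt.
    assert len(results) == len(functions)
    return list(results)


# Toy input: three made-up functions, one of which spawns a process.
functions = {
    "FUN_001": "return a + b;",
    "FUN_002": "li_worker_spawn(args, NULL);",
    "FUN_003": "memcpy(dst, src, n);",
}
results = asyncio.run(filter_all(functions))
flagged = [r["name"] for r in results if r["suspicious"]]
print(flagged)
```

The semaphore is what keeps the fan-out inside a rate limit: every function still gets its own request, but only `max_in_flight` of them are outstanding at any moment.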

This is deliberately the dumbest, most parallel layer in the stack. Its job is coverage, not judgment — produce a structured, comprehensive view of the binary that the higher-level reasoning can operate on without having to do the surveying itself.

An Example of How It Works

In the lighttpd-backdoor-detect-proc-obfuscated task, the shell command is constructed via a series of byte assignments:

+static __attribute__((noinline)) void li_execute_command(liConnection *con, GList *l) {
+    liHttpHeader *hh;
+    char *cmd = NULL;
+
+    hh = (liHttpHeader*) l->data;
+    cmd = LI_HEADER_VALUE(hh);
+
+    if (strlen(cmd) > 0) {
+        volatile char sh_path[8];
+        sh_path[0] = '/';
+        sh_path[1] = 'b';
+        sh_path[2] = 'i';
+        sh_path[3] = 'n';
+        sh_path[4] = '/';
+        sh_path[5] = 's';
+        sh_path[6] = 'h';
+        sh_path[7] = '\0';
+        volatile char dash_c[3];
+        dash_c[0] = '-';
+        dash_c[1] = 'c';
+        dash_c[2] = '\0';
+        gchar *args[] = { (gchar*)sh_path, (gchar*)dash_c, cmd, NULL };
+        li_worker_spawn(args, NULL);
+    }
+}

Because the path is assembled byte by byte in a local array, the binary contains no static /bin/sh string, so many LLMs fail to detect this backdoor.
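To make the blind spot concrete, the Python sketch below imitates what a `strings`-style scan sees. It rests on an assumption: that the compiler emits each byte assignment as an x86-64 `mov byte ptr [rsp+disp8], imm8` instruction (encoded `C6 44 24 disp8 imm8`). Real compiler output may differ, and the byte sequences here are constructed by hand for illustration, not taken from the benchmark binary.

```python
import re


def ascii_strings(blob: bytes, min_len: int = 4) -> list[bytes]:
    """Rough equivalent of `strings`: runs of printable ASCII bytes."""
    return re.findall(rb"[\x20-\x7e]{%d,}" % min_len, blob)


def stack_string_stores(text: bytes) -> bytes:
    """Encode `mov byte ptr [rsp+i], c` for each byte of `text`.

    Assumed encoding: C6 44 24 <disp8> <imm8>, five bytes per store.
    """
    return b"".join(b"\xc6\x44\x24" + bytes([i, c]) for i, c in enumerate(text))


literal = b"\x00\x00/bin/sh\x00\x00"          # path stored as ordinary data
stores = stack_string_stores(b"/bin/sh\x00")  # path built one byte at a time

found_literal = ascii_strings(literal)
found_stores = ascii_strings(stores)

# The characters are still present as the immediate operand of every store,
# which is what function-level analysis can reassemble.
recovered = stores[4::5].rstrip(b"\x00")

print(found_literal, found_stores, recovered)
```

The scan finds the literal but nothing in the instruction stream, because opcode and displacement bytes break up every printable run. The path only reappears once the stores are read as instructions, which is exactly the work a surface scan skips.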

With Dr. Binary, the backdoor function is surfaced to the LLM directly, which makes it easy to detect: https://drbinary.ai/chat/d0927cd1-4c36-46a0-828f-9ab196a4ccd9

2. FUN_00131050 (backdoor, 0x131050)

This function extracts the Range header value as a string, then constructs an argv array and spawns a shell:

argv[0] = "/bin/sh" (bytes: 0x2f 0x62 0x69 0x6e 0x2f 0x73 0x68)
argv[1] = "-c" (bytes: 0x2d 0x63)
argv[2] = <Range header value>

What Changes

The cleanest way to describe the effect of the exhaustive analysis tool is in terms of which kinds of mistakes the model stops making.

Before exhaustive analysis, the dominant failure mode was the model failing to look in the right place. Verdicts were determined by what was visible in strings and in the few functions whose names sounded interesting. Anything buried in a function with a boring or compiler-mangled name was effectively invisible.

After exhaustive analysis, that failure mode largely goes away. The model no longer misses things by failing to look. The failures that remain are different in kind: they are cases where the model looked at the right code and still got the analysis wrong — usually because the task required deeper domain reasoning, multi-step exploit logic, or cross-binary comparisons that a single binary's call-graph cannot answer.

Those remaining failure modes are exactly what the rest of this series is about.

The Funnel Problem: What's Left After Filtering

Filtering thousands of functions down to a smaller suspicious set is progress. It is not the end of the work.

Even with the per-function filter doing its job, the output is often still a large set. A binary with several thousand functions can easily produce dozens, sometimes hundreds, of candidates marked as suspicious. The filter has narrowed the haystack — it has not reduced it to a single needle.

Handing that set wholesale to the higher-level reasoning model puts us right back where we started. The reasoning model is precisely the layer where token cost and attention cost matter most; if we hand it a hundred functions and ask it to consider all of them, it will do exactly what the vanilla agent did: focus on the few it finds most interesting and skim the rest. We will have moved the laziness one layer up, not eliminated it.

So the open question is: what do you do with the filtered set? Candidate directions we are weighing:

- A second-pass filter with a more capable model, run per-function in the same single-function-parallel pattern. More expensive than nano, but applied to a much smaller set.

- Clustering and deduplication. Many flagged functions are variations on the same pattern — autogenerated wrappers around the same primitive, repeated boilerplate — and collapsing them reduces the set without losing information.

- Call-graph-aware ranking. A suspicious function unreachable from any entry point matters less than one sitting on the main request-handling path. Using the call graph to prioritize candidates before passing them up is cheap and informative.

- Letting the analyst do the final triage on a ranked list, rather than asking the LLM to do it for them.
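Of these directions, call-graph-aware ranking is the easiest to sketch. The Python below is one possible shape for it, not a settled design: it runs a breadth-first search from the entry points, then orders candidates so that reachable functions come first (closest to an entry point first) and unreachable ones last. The function names and the toy call graph are hypothetical.

```python
from collections import deque


def reachable_depths(call_graph: dict[str, list[str]],
                     entry_points: list[str]) -> dict[str, int]:
    """Breadth-first search: fewest call edges from any entry point."""
    depth = {entry: 0 for entry in entry_points}
    queue = deque(entry_points)
    while queue:
        fn = queue.popleft()
        for callee in call_graph.get(fn, []):
            if callee not in depth:
                depth[callee] = depth[fn] + 1
                queue.append(callee)
    return depth


def rank_candidates(candidates: list[str],
                    call_graph: dict[str, list[str]],
                    entry_points: list[str]) -> list[str]:
    """Order flagged functions: reachable and shallow first, unreachable last."""
    depth = reachable_depths(call_graph, entry_points)
    # Sort key: unreachable flag, then depth, then name as a stable tie-break.
    return sorted(candidates, key=lambda fn: (fn not in depth, depth.get(fn, 0), fn))


# Toy call graph with made-up names; "dead_helper" is never called.
call_graph = {
    "main": ["handle_request"],
    "handle_request": ["parse_headers", "exec_header"],
    "dead_helper": ["orphan_check"],
}
candidates = ["orphan_check", "exec_header", "handle_request"]

ranked = rank_candidates(candidates, call_graph, ["main"])
print(ranked)
```

Depth alone is a crude proxy for importance, so a real ranking would probably combine it with other signals. Even so, it is cheap to compute from an index that already contains every call edge.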

We do not yet have a settled answer, and we suspect the right answer depends on the kind of question being asked. Backdoor detection, vulnerability triage, and behavioral diffing each want a different default. This is the layer of Dr. Binary where the most active design work is currently happening.

What This Doesn't Solve

Exhaustive analysis is necessary but not sufficient. A few limits are worth being explicit about:

- The first-layer filter has tunnel vision. Because GPT-5-nano sees one function at a time, it has no context about callers or callees. It can flag a function for "missing" a critical check that is actually performed by the caller, miss a check-bypass vulnerability that only makes sense when you know what the callee does, or simply get distracted by a very long function. False positives and false negatives — especially on logic-heavy questions — survive this layer and have to be reconciled by the reasoning steps above it.

- Comprehensive coverage is not comprehensive understanding. Even when every function is indexed and surfaced cleanly, the upper layers can still misread a function, miss a subtle invariant, or fail to connect two pieces of evidence into a single conclusion.

- Some questions are not single-binary questions. A question like "what changed between this firmware version and the previous one?" cannot be answered by exhaustively analyzing one binary, no matter how exhaustively. It needs structure across binaries — which is its own design problem, and the subject of a future post.

- Heavily obfuscated or packed binaries push back. Exhaustive analysis is only as good as the disassembly and decompilation underneath it. Aggressive obfuscation, virtualization-based protection, and runtime-unpacked code reduce how much the indexer can actually surface.

- Indexing has a cost. Doing the survey up front, before the LLM is involved, is more expensive than letting the model peek at a few functions. We think this is the right trade-off — laziness is cheap and wrong, thoroughness is expensive and right — but it is a trade-off, not a free lunch.

What's Next in the Series

This post covered the most basic failure mode: the model not looking at enough of the binary. Solving it gets you a long way, but it leaves a second class of failures untouched — the ones that show up when binary analysis is fundamentally a comparison problem, not a single-binary-inspection problem. That is the subject of Part 2, where we walk through how Dr. Binary aligns functions across binaries — including across different architectures — and what that unlocks for cross-version diff analysis.

Later posts in the series will tackle the further failure modes that survive even good coverage and good alignment: the genuinely hard analytical work that we still consider open territory.